Over the past few months, I’ve been increasingly interested in autonomous robocars. The same intellectual curiosity that four years ago drove me into flying robots guided by GPS and accelerometers is now motivating me to build driving robots guided by computer vision and other sensors. Four years ago, it was quite challenging to send an autonomous drone or rover through an outdoor course even when relying on GPS (see the Sparkfun AVC 2014 video for some amusing bloopers). Now, with all of the progress made by the developers at APM and PX4, this is trivial. Today one could purchase a 3DR Solo for $299 at Best Buy, download a free copy of Tower, and trounce essentially all of the homebuilt masterpieces that competed in 2014.

That said, the game is not over. Vehicles navigating by GPS are blind. They cannot react to changes in their surroundings, navigate through areas where the sky is occluded, or learn from their mistakes. Transitioning from GPS- to vision-based navigation is a tremendous challenge that numerous technology companies are attacking with hundreds of engineers, but also a riddle that you or I can tinker with in our free time.

My boss, Chris Anderson, the CEO of 3DR, has begun hosting a wonderful meetup in Oakland to bring hackers together to work on this very problem of DIY autonomous robocars. Steps for getting set up are online at http://diyrobocars.com/, but the gist of the competition is that anyone can pick up a chassis for less than $100, add a RasPi camera, and begin working without any major ramp up.

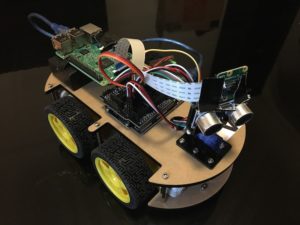

My teammate, Tori, and I elected to begin with the Elegoo robotic car kit. Included is an Arduino-based control system that interfaces with a variety of different I/O sources and drives four DC electric motors. The kit ships with sample code demonstrating how to follow a line using the included IR sensors, avoid obstacles using the servo-mounted sonar, and connect to IR and Bluetooth remote controls.

After a fair amount of debugging and tuning, our Elegoo robocar was lapping the RBG track using a modified version of the sample line tracking code. Once all was said and done, we had tuned the refresh rate and line tracker potentiometers, debugged the turn left function (which in the example code actually caused the vehicle to reverse), and lifted the car by mounting the motors underneath the chassis.

Now that the robocar was up and running, it was time to get serious. Three-point line trackers only enable very simple behavior in controlled environments, but we wanted our car to be able to navigate through any arbitrary track. To achieve this goal, we had to mount a camera. The obvious choice was the RasPi camera, which is cheap, easy to use, and very popular among makers.

The next choice was where to run the computer vision software. True robocar purists insist on running all of the code on the moving platform. The Raspberry Pi 3 board we selected has ample compute and this is totally possible, but development is much easier on a laptop, so we decided to stream the video and work on a system with which we were more familiar. This turned out to be easier said than done.

Step 1: Set up the computer

To stream video from a Pi cam to a computer, the first step is to connect both to the same wireless network and discover the IP addresses of both. The computer is easy. Simply open a terminal window and type

ifconfig

You will be rewarded with a list of all of your network interfaces and their local addresses. For my MacBook Pro, en0 refers to the Airport WiFi card.

en0: flags=8863<UP,BROADCAST,SMART,RUNNING,SIMPLEX,MULTICAST> mtu 1500

ether a0:99:9b:05:cb:4d

inet6 fe80::10d5:45ba:ab90:fe73%en0 prefixlen 64 secured scopeid 0x4

inet 10.1.49.106 netmask 0xfffffc00 broadcast 10.1.51.255

nd6 options=201<PERFORMNUD,DAD>

media: autoselect

status: active

Here, I can see that my computer’s private IP address is 10.1.49.106. This is the address to which I will send the Raspberry Pi video stream. If you have a different computer or would like to learn about the other ports, run

networksetup -listallhardwareports

from the terminal and look for the WiFi device. Alternatively, you can hold “option” while clicking the WiFi menu in the upper right-hand corner and the IP address of the computer and the router will be displayed.
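If you would rather pull the address out of the ifconfig output than read it by eye, a small filter can do it. This is only a sketch: iface_ipv4 is a made-up helper name, and it assumes the macOS-style layout shown above (an unindented "en0:" header followed by indented "inet ..." lines). Older Linux ifconfig builds that print "inet addr:" will not match.

```shell
#!/bin/sh
# Print the IPv4 address of one interface from ifconfig-style text on stdin.
# iface_ipv4 is a hypothetical helper name, not part of any standard tool.
iface_ipv4() {
    awk -v iface="$1" '
        # An unindented line starts a new interface section, e.g. "en0: flags=..."
        /^[^ \t]/ { current = $1; sub(/:$/, "", current) }
        # Inside the requested section, the "inet" line carries the IPv4 address
        current == iface && $1 == "inet" { print $2; exit }
    '
}

# Usage (en0 is the WiFi card on my machine, yours may differ):
#   ifconfig | iface_ipv4 en0
```

On a Mac, I believe ipconfig getifaddr en0 returns the same thing in one step, but the filter above also works on the Pi, where wlan0 is the interface of interest.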

Step 2: Set up the Pi over ethernet

Next, I need to set up the Raspberry Pi on the network. This is far more complex than it should be and can be accomplished in one of two ways.

Method one is to connect your Pi to a monitor, keyboard, and mouse and boot up as you would a normal computer. Connect to your WiFi network using the GUI tools and run ifconfig from the Pi terminal to determine the IP address. This method is much easier but requires a ton of excess gear.

Method two involves booting up the Pi in headless mode. In an ideal world, the Pi would boot upon connection to a power source and automatically connect to the right WiFi network. Unfortunately, this requires setting up a config file ahead of time with the network name and password. Seeing as this is often impossible, I will follow a more general method.

Boot up your Pi and connect it to your computer via ethernet cable. For a Macbook Pro, you will need an ethernet to Thunderbolt adapter. After connecting, you can now scan your computer’s hardware ports again and see that the Pi appears on the Thunderbolt Ethernet, en6 in my case.

I can then SSH into the Pi using either

ssh pi@raspberrypi.local

or

ssh pi@169.254.149.98

where the IP address above is the Pi’s IP address you learned from running ifconfig after plugging in the ethernet cable. Note that the default password is “raspberry”.

Now we are moving. To stream the video, we are going to use a utility called netcat, abbreviated nc in the terminal. Let’s run a simple experiment to see how this works. Type

nc -l 5555

into the terminal of the Pi, either directly or via the SSH session. The port doesn’t matter, but must not be in use by another process. Your Pi is now listening for a connection on that port.

Next, type

nc 169.254.149.98 5555

into the terminal of your computer, where the IP address is that of your Pi and the port matches the port above. Your devices are now linked. Notice that you can type something into either terminal window and it will be mirrored in the other.

Now, practice using nc by switching the roles of the Pi and the computer. You can see how one can listen for the other and vice-versa. We will use this approach to stream the video.

Step 3: Stream the video

We will use a utility called raspivid to capture the feed from the camera. While the computer is listening, type

raspivid -t 999999 -o - | nc 169.254.223.33 5555

into the Pi’s terminal. The “-t” option sets how long raspivid will run, in milliseconds (999999 ms is roughly 16.7 minutes, and -t 0 disables the timeout), the “-o -” tells raspivid to write the video to standard output instead of a file, and the |, also called a pipe, sends that output to netcat. The IP address here is that of the listening computer, not the Pi.

Note: this is hyphen o hyphen. Many of the guides out there incorrectly state that this symbol is some kind of dash. It is a hyphen, which is found between the zero and the equal sign on my keyboard.

After executing this command, you’ll notice your computer starts going wild. This is because we are sending video information to a terminal window and need some program to interpret the signal.

Again, we will take advantage of the pipe to forward the video signal to a program that can interpret and display it. This time, set your computer to listen with netcat and pipe the signal to mplayer using the command below.

nc -l 5555 | mplayer -fps 31 -cache 1024 -

Note: as before, all of these horizontal lines are hyphens.

Now, when you fire up streaming on the Pi using

raspivid -t 999999 -o - | nc 169.254.223.33 5555

you will see the video feed from the camera on your display.
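Since the address will change once the Pi moves to WiFi, it can be handy to wrap the sender in a small function. The sketch below is one way to do that, not the only one. The names minutes_to_ms, stream_to, and DRY_RUN are made up for illustration. It relies on raspivid taking its -t value in milliseconds, with 0 meaning no timeout.

```shell
#!/bin/sh
# Hypothetical helpers for the Pi side of the stream; the names are illustrative.

# Convert whole minutes into the milliseconds that raspivid -t expects.
minutes_to_ms() {
    echo $(( $1 * 60 * 1000 ))
}

# Stream the camera to a listening computer.
#   $1 = computer's IP address, $2 = port (default 5555),
#   $3 = run time in minutes (default 0, which raspivid treats as "no timeout")
# Set DRY_RUN=1 to print the pipeline instead of running it.
stream_to() {
    host="$1"
    port="${2:-5555}"
    ms=$(minutes_to_ms "${3:-0}")
    if [ -n "${DRY_RUN:-}" ]; then
        echo "raspivid -t $ms -o - | nc $host $port"
    else
        raspivid -t "$ms" -o - | nc "$host" "$port"
    fi
}

# Usage on the Pi, once the computer is already listening:
#   stream_to 169.254.223.33 5555 30
```

Running it once with DRY_RUN=1 is a cheap way to confirm the hyphens and the address are right before the terminal fills with video bytes.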

Step 4: Connect the Pi to your local network

However, streaming video doesn’t do all that much good when it requires an ethernet connection. The next step is to set your Pi up to automatically connect to your local network. This step, of course, is not necessary if you have a monitor, mouse, and keyboard and use the network tools available in the Raspbian GUI.

SSH into the Pi over ethernet and type

sudo nano /etc/wpa_supplicant/wpa_supplicant.conf

into the terminal. This will open a network config file with write access. You will see any network you have connected to listed in the format below.

ctrl_interface=DIR=/var/run/wpa_supplicant GROUP=netdev

update_config=1

country=US

network={

ssid="network_name"

psk="network_password"

key_mgmt=WPA-PSK

}

Simply add your network SSID and password as another network block and reboot the Pi using sudo reboot. Your Pi will then automatically connect to your local network and all of the goodies above are available wirelessly.
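If you would rather not hand-edit the file in nano, the network block can be generated and appended instead. This is a rough sketch under a few assumptions: wifi_block is a made-up helper name, the network uses WPA-PSK as in the example above, and neither the SSID nor the password contains double quotes or backslashes. The quotes it writes are plain straight quotes, which is what the config file needs.

```shell
#!/bin/sh
# Print a WPA-PSK network block in the format used by wpa_supplicant.conf.
# wifi_block is a hypothetical helper name.
wifi_block() {
    ssid="$1"
    psk="$2"
    # WPA passphrases must be 8 to 63 characters long.
    if [ "${#psk}" -lt 8 ] || [ "${#psk}" -gt 63 ]; then
        echo "passphrase must be 8-63 characters" >&2
        return 1
    fi
    printf 'network={\n'
    printf '    ssid="%s"\n' "$ssid"
    printf '    psk="%s"\n' "$psk"
    printf '    key_mgmt=WPA-PSK\n'
    printf '}\n'
}

# Usage on the Pi (appends to the existing config, then reboots):
#   wifi_block "network_name" "network_password" | sudo tee -a /etc/wpa_supplicant/wpa_supplicant.conf
#   sudo reboot
```

The password ends up in the file in plain text either way, so treat the SD card accordingly.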

Verify you are on the network by running ifconfig from the SSH shell. You will see you now have a WiFi IP address on the wlan0 port. SSH into the Pi using that IP address (ssh pi@10.1.49.159) and unplug the ethernet cable.

You can now stream video from the Pi wirelessly by replacing the ethernet IP address you used before with the WiFi one and are ready to begin running your CV algorithms on your laptop.

One last pro trick: if you don’t have an ethernet cable, have already set up the WiFi config file, and just need to know the Raspberry Pi IP address to SSH in, run

nmap YOUR_IP_ADDRESS/24

Terminal will return a list of all of the devices connected to the network. Simply find the Pi, SSH on in, and follow the instructions above.
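One caveat on the /24: it assumes a 255.255.255.0 netmask. The ifconfig output from Step 1 shows a netmask of 0xfffffc00, which is a wider network, so a /24 scan only covers part of it. The sketch below converts the hex netmask into the prefix length to put after the slash. mask_to_prefix is a made-up helper name, and it assumes the shell does 64-bit arithmetic, which is typical on current systems.

```shell
#!/bin/sh
# Convert a hexadecimal netmask such as 0xfffffc00 into a CIDR prefix length.
# mask_to_prefix is a hypothetical helper name.
mask_to_prefix() {
    mask=$(( $1 ))      # shell arithmetic understands the 0x prefix
    bits=0
    while [ "$mask" -gt 0 ]; do
        bits=$(( bits + (mask & 1) ))   # count each bit that is set
        mask=$(( mask >> 1 ))
    done
    echo "$bits"
}

# Usage with the values from my ifconfig output:
#   nmap -sn 10.1.49.106/$(mask_to_prefix 0xfffffc00)
```

For my network this gives a prefix of 22. The -sn flag asks nmap for a host discovery scan without port scanning, which should be quicker when all you want is the list of devices.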

If this was useful, take a look at my similar guide for streaming images from Solo to the web.